Write-behind quickstart

This guide takes you through the creation of a write-behind pipeline.

Concepts

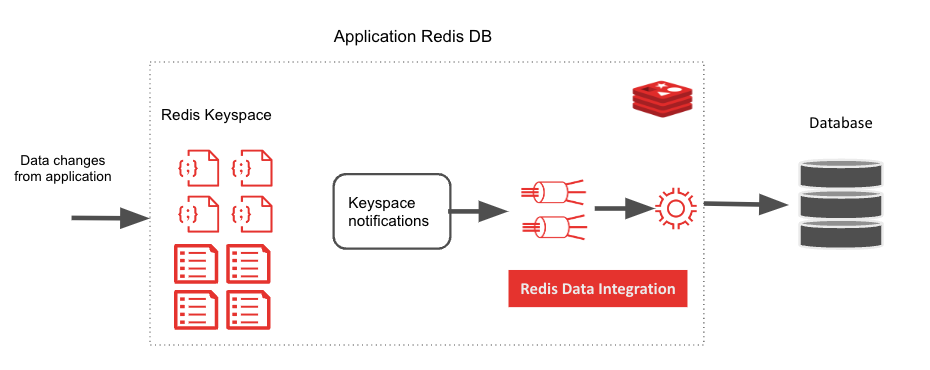

Write-behind is a RDI pipeline used to synchronize data in a Redis database with a downstream data store. You can think about it as a pipeline that starts with change data capture (CDC) events for a Redis database and then filters, transforms, and maps the data to the target data store data structures.

The target data store to which the write-behind pipeline connects and writes data.

The write-behind pipeline is composed of one or more jobs. Each job is responsible for capturing change for one key pattern in Redis and mapping it to one or more tables in the downstream data store. Each job is defined in a YAML file.

Supported data stores

RDI write-behind currently supports these target data stores:

| Data Store |

|---|

| Cassandra |

| MariaDB |

| MySQL |

| Oracle |

| PostgreSQL |

| Redis Enterprise |

| SQL Server |

Prerequisites

The only prerequisite for running RDI write-behind is Redis Gears Python >= 1.2.6 installed on the Redis Enterprise Cluster and enabled for the database you want to mirror to the downstream data store. For more information, see RedisGears installation.

Preparing the write-behind pipeline

Install RDI CLI on a Linux host that has connectivity to your Redis Enterprise Cluster.

Run the

configurecommand to install the RDI Engine on your Redis database, if you have not used this Redis database with RDI write-behind before.Run the

scaffoldcommand with the type of data store you want to use, for example:redis-di scaffold --strategy write_behind --dir . --db-type mysqlThis creates a template

config.yamlfile and a folder namedjobsunder the current directory. You can specify any folder name with--diror use the--preview config.yamloption, if your RDI CLI is deployed inside a Kubernetes (K8s) pod, to get theconfig.yamltemplate to the terminal.Add the connections required for downstream targets in the

connectionssection ofconfig.yaml, for example:connections: my-postgres: type: postgresql host: 172.17.0.3 port: 5432 database: postgres user: postgres password: postgres #query_args: # sslmode: verify-ca # sslrootcert: /opt/work/ssl/ca.crt # sslkey: /opt/work/ssl/client.key # sslcert: /opt/work/ssl/client.crt my-mysql: type: mysql host: 172.17.0.4 port: 3306 database: test user: test password: test #connect_args: # ssl_ca: /opt/ssl/ca.crt # ssl_cert: /opt/ssl/client.crt # ssl_key: /opt/ssl/client.keyThis is the first section of the

config.yamlfile and typically the only one you’ll need to edit. Theconnectionssection is designed to have many target connections. In the previous example, there are two downstream connections namedmy-postgresandmy-mysql.To obtain a secured connection using TLS, you can add more

connect_argsorquery_args, depending on the specific target database terminology, to the connection definition.The name can be any arbitrary name as long as it is:

- Unique for this RDI engine

- Referenced correctly by the jobs in the respective YAML files

In order to prepare the pipeline, fill in the correct information for the target data store. Secrets can be provided using a reference to a secret (see below) or by specifying a path.

The applier section has information about the batch size and frequency used to write data to the target.

Some of the applier attributes such as target_data_type, wait_enabled, and retry_on_replica_failure are specific for the RDI ingest pipeline and can be ignored.

Write-behind jobs

Write-behind jobs are a mandatory part of the write-behind pipeline configuration.

Under the jobs directory (parallel to config.yaml) you should have a job definition in a YAML file for every key pattern you want to write to a downstream database table.

The YAML file can be named using the destination table name or another naming convention, but it has to have a unique name.

Job definition has the following structure:

source:

redis:

key_pattern: emp:*

trigger: write-behind

exclude_commands: ["json.del"]

transform:

- uses: rename_field

with:

from_field: after.country

to_field: after.my_country

output:

- uses: relational.write

with:

connection: my-connection

schema: my-schema

table: my-table

keys:

- first_name

- last_name

mapping:

- first_name

- last_name

- address

- gender

Source section

The source section describes the source of data in the pipeline.

The redis section is common for every pipeline initiated by an event in Redis, such as applying changes to data. In the case of write-behind, it has the information required to activate a pipeline dealing with changes to data. It includes the following attributes:

The

key_patternattribute specifies the pattern of Redis keys to listen on. The pattern must correspond to keys that are of Hash or JSON type.The

exclude_commandsattribute specifies which commands to ignore. For example, if you listen on a key pattern with Hash values, you can exclude theHDELcommand so no data deletions will propagate to the downstream database. If you don’t specify this attribute, RDI write-behind acts on all relevant commands.The

triggerattribute is mandatory and must be set towrite-behind.The

row_formatattribute can be used with the valuefullto receive both thebeforeandaftersections of the payload. Note that for write-behind events thebeforevalue of the key is never provided.

Note: RDI write-behind does not support the

expiredevent. Therefore, keys that are expired in Redis will not be deleted from the target database automatically. Notes: Theredisattribute is a breaking change replacing thekeyspaceattribute. Thekey_patternattribute replaces thepatternattribute. Theexclude_commandsattributes replaces theexclude-commandsattribute. If you upgrade to version 0.105 and beyond, you must edit your existing jobs and redeploy them.

Output section

The output section is critical. It specifies a reference to a connection from the config.yaml connections section:

The

usesattribute specifies the type of writer RDI write-behind will use to prepare and write the data to the target. In this example, it isrelational.write, a writer that translates the data into a SQL statement with the specific dialect of the downstream relational database. For a full list of supported writers, see data transformation block types.The

schemaattribute specifies the schema/database to use (different database have different names for schema in the object hierarchy).The

tableattribute specifies the downstream table to use.The

keyssection specifies the field(s) in the table that are the unique constraints in that table.The

mappingsection is used to map database columns to Redis fields with different names or to expressions. The mapping can be all Redis data fields or a subset of them.

Note: The columns used in

keyswill be automatically included, so there’s no need to repeat them in themappingsection.

Apply filters and transformations to write-behind

The RDI write-behind jobs can apply filters and transformations to the data before it is written to the target. Specify the filters and transformations under the transform section.

Filters

Use filters to skip some of the data and not apply it to target. Filters can apply simple or complex expressions that take as arguments the Redis entry key, fields, and even the change op code (create, delete, update, etc.). See Filter for more information.

Transformations

Transformations manipulate the data in one of the following ways:

- Renaming a field

- Adding a field

- Removing a field

- Mapping source fields to use in output

To learn more about transformations, see data transformation pipeline.

Provide target’s secrets

The target’s secrets (such as TLS certificates) can be read from a path on the Redis node’s file system. This allows the consumption of secrets injected from secret stores.

Deploy the write-behind pipeline

To start the pipeline, run the deploy command:

redis-di deploy

You can check that the pipeline is running, receiving, and writing data using the status command:

redis-di status

Monitor the write-behind pipeline

The RDI write-behind pipeline collects the following metrics:

| Metric Description | Metric in Prometheus |

|---|---|

| Total incoming events by stream | Calculated as a Prometheus DB query: sum(pending, rejected, filtered, inserted, updated, deleted) |

| Created incoming events by stream | rdi_metrics_incoming_entries{data_source:"…",operation="inserted"} |

| Updated incoming events by stream | rdi_metrics_incoming_entries{data_source:"…",operation="updated"} |

| Deleted incoming events by stream | rdi_metrics_incoming_entries{data_source:"…",operation="deleted"} |

| Filtered incoming events by stream | rdi_metrics_incoming_entries{data_source:"…",operation="filtered"} |

| Malformed incoming events by stream | rdi_metrics_incoming_entries{data_source:"…",operation="rejected"} |

| Total events per stream (snapshot) | rdi_metrics_stream_size{data_source:""} |

| Time in stream (snapshot) | rdi_metrics_stream_last_latency_ms{data_source:"…"} |

To use the metrics you can either:

Run the

statuscommand:redis-di statusScrape the metrics using RDI’s Prometheus exporter

Upgrading

If you need to upgrade RDI, you should use the upgrade command that provides for a zero downtime upgrade:

redis-di upgrade ...

See the Upgrade guide for more information.